Current Challenges in Evaluating Performance

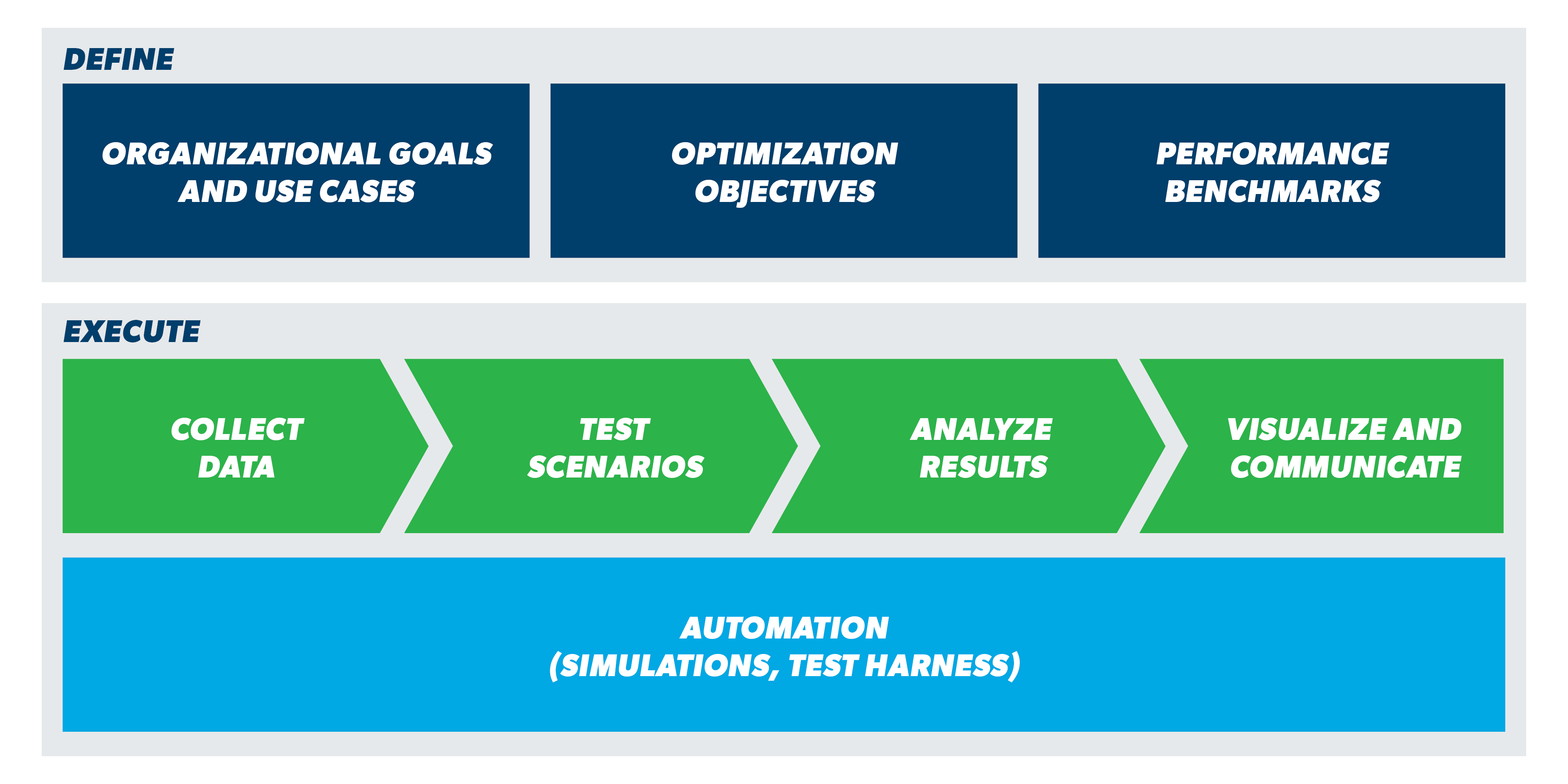

Proprietary vendor algorithms make it difficult for utilities to determine why the software arrived at a particular optimization solution. Even when the optimization software successfully solves the OPF formulation, the effectiveness of the solution depends on several external factors: the availability and accuracy of as-operated network models; forecast, real-time, and historical load and generation data; weather data; and other inputs.

From a data modeling perspective, utilities need to be able to realistically model regulatory requirements, contractual constraints, and consumer behavior, such as electric vehicle charging patterns, so that test scenarios reflect real-world use cases. Special tooling is required to model and simulate advanced scenarios, especially future grid states with scaled-up DER penetration.
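As a rough illustration of what such a test scenario might look like, the sketch below builds a net load profile for a single feeder by adding a block of electric vehicle charging to a base load and subtracting photovoltaic output scaled by a penetration multiplier. Everything here is hypothetical: the `Scenario` fields, the `net_load` function, the fixed-window charging pattern, and the linear scaling of PV output are simplifying assumptions chosen for illustration, not features of any vendor tool.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """Hypothetical single-feeder test scenario (all names are illustrative)."""
    base_load_kw: list[float]   # load per time step, excluding EVs and DER
    pv_output_kw: list[float]   # PV output per time step at today's penetration
    ev_count: int               # number of EVs charging on the feeder
    ev_charger_kw: float        # assumed constant charging power per EV
    ev_start_step: int          # time step at which all EVs begin charging
    ev_duration_steps: int      # number of consecutive steps each EV charges


def net_load(s: Scenario, der_scale: float) -> list[float]:
    """Return net load per step: base load + EV charging - scaled PV output.

    der_scale = 1.0 reproduces today's PV output; 2.0 doubles it, and so on.
    Assumes every EV charges in the same window, which wraps around the
    end of the profile (for example, overnight charging).
    """
    steps = len(s.base_load_kw)
    if len(s.pv_output_kw) != steps:
        raise ValueError("base_load_kw and pv_output_kw must have equal length")

    # Set of time steps during which the EV fleet is charging.
    ev_steps = {(s.ev_start_step + i) % steps for i in range(s.ev_duration_steps)}

    profile = []
    for t in range(steps):
        ev_kw = s.ev_count * s.ev_charger_kw if t in ev_steps else 0.0
        profile.append(s.base_load_kw[t] + ev_kw - der_scale * s.pv_output_kw[t])
    return profile
```

Sweeping `der_scale` over a range of values and feeding each resulting profile to the optimization software would be one simple way to compare its behavior under present-day and future penetration levels. A realistic study would replace the fixed charging window with measured or stochastic charging behavior and would model DER at individual network nodes rather than as a feeder-level aggregate.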